The figure below compares the release dates and differences of the Raspberry Pi models (up to the Raspberry Pi 3 Model B).


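Amazon AWS IoT

Platform positioning

- AWS IoT is a managed cloud platform that lets connected devices interact easily and securely with cloud applications and other devices.

- AWS IoT can support billions of devices and trillions of messages, and it can process those messages and route them securely and reliably to AWS endpoints and to other devices. Applications can keep track of all devices and communicate with them at any time, even when the devices are not connected.

- You can build IoT applications with AWS services such as AWS Lambda, Amazon Kinesis, Amazon S3, Amazon Machine Learning, Amazon DynamoDB, Amazon CloudWatch, AWS CloudTrail, and Amazon Elasticsearch Service with built-in Kibana integration. These applications collect, process, analyze, and act on the data generated by connected devices, without requiring you to manage any infrastructure.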

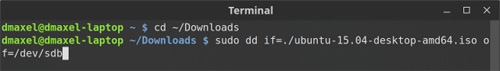

Viewing the progress of the dd command on Ubuntu 16.04

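Running a command like the following on Ubuntu 16.04 can take a very long time (as written, it asks dd to write roughly 1 TB of zeros), so it is very useful to have dd report its progress:

    $ dd if=/dev/zero of=/tmp/zero.img bs=10M count=100000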

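Open another shell and run the following command to make dd print its progress once per second. The statistics appear in the terminal where dd is running, not in the one running watch:

    $ watch -n 1 pkill -USR1 -x dd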
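As an alternative to signalling dd from a second shell, GNU dd can report progress itself. The following is a minimal sketch, assuming GNU coreutils 8.24 or later, where the `status=progress` operand was added. The output path and the small 16 MiB size are illustrative choices to keep the demo quick; they are not taken from the command above.

```shell
#!/bin/sh
# Assumption: GNU dd from coreutils 8.24+; older versions do not know status=progress.
# The target path and size are illustrative only.
out="${TMPDIR:-/tmp}/dd-progress-demo.img"

# Write 16 blocks of 1 MiB of zeros; dd prints periodic transfer statistics to stderr.
dd if=/dev/zero of="$out" bs=1M count=16 status=progress

# Report the resulting file size in bytes.
size=$(wc -c < "$out")
echo "wrote $size bytes to $out"
```

For a long-running copy, the same operand can be appended to the original command line, and the progress line is then refreshed in place in the terminal running dd.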

References

Creating an Ubuntu installation USB drive on Linux

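Adding multilingual support to your WordPress site with the Polylang plugin

If your WordPress site targets a global audience, it is well worth adding multilingual support, and the Polylang plugin handles this nicely. With Polylang you add the languages you need, and you can then translate the site title, posts, pages, categories, tags, menus, widgets, and so on into each of them.

The plugin can switch automatically to the matching language version based on the browser's language. For example, you can publish a post in Simplified Chinese as usual and then add an English version of it (an English title and body). A visitor whose browser is set to English will then automatically be shown the English version of the post.

Note: the plugin does not generate translations of your posts automatically; you have to translate them yourself.

Polylang supports not only standalone WordPress sites but also multisite mode. Users can also choose the language of the admin interface for themselves.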


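ROCm: AMD's open-source platform for HPC, hyperscale GPU computing, and deep learning

ROCm stands for Radeon Open Compute platform. It is an open-source GPU computing platform that AMD launched last December, and it has now reached version 1.3. MIOpen is the software library AMD developed for it; its role is to connect programming languages to the ROCm platform so that they can make full use of the GCN architecture.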

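This release includes the following:

- Deep convolution solvers optimized for both forward and backward propagation

- Optimized convolutions, including Winograd and FFT transformations

- GEMM optimized for deep learning

- Pooling, Softmax, activations, gradient algorithms, batch normalization, and LR normalization

- MIOpen describes data as 4-D tensors in NCHW format

- Framework APIs with OpenCL and HIP support

- The MIOpen driver can test the forward/backward pass of any particular layer in MIOpen

- Binary packages add support for Ubuntu 16.04 and Fedora 24

- The source code is at https://github.com/ROCmSoftwarePlatform/MIOpen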


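Drawing flowcharts with graphviz

Preface

In day-to-day development, commenting code is an important part of keeping it maintainable, but comments alone are not enough. As a system's functionality grows more complex and involves more and more modules, it becomes hard to understand the system at a high level from the code alone. That is where diagrams come in: they can show how features interact, and they can also illustrate complex data structures, data flows, and so on.

However, common UML modeling tools such as Visio are somewhat complicated and bulky. For developers, and back-end developers in particular, the command line and scripts are the friendliest tools, while graphical interfaces get in the way of productivity to a large degree. A developer's best friend is the keyboard, not the mouse.

Introduction to graphviz

This post introduces graphviz, an efficient and simple drawing tool. graphviz is an open-source toolkit developed at Bell Labs. It uses a dedicated DSL (domain-specific language) called dot as its scripting language; a layout engine parses the script and lays the graph out automatically. graphviz can export to many formats, including common image formats, SVG, and PDF.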
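To make that workflow concrete, here is a minimal sketch: a shell script writes a small dot file and, if the `dot` command from graphviz is installed, renders it to PNG. The file names and the three-node flow are invented for illustration and do not come from the original post.

```shell
#!/bin/sh
# Illustrative file names; not from the original article.
src="${TMPDIR:-/tmp}/flow-demo.dot"
png="${TMPDIR:-/tmp}/flow-demo.png"

# Describe a tiny flowchart in the dot language: nodes first, then directed edges.
cat > "$src" <<'EOF'
digraph flow {
    rankdir=TB;                      // lay the graph out top to bottom
    node [shape=box];                // default node shape
    start [shape=ellipse, label="Start"];
    check [shape=diamond, label="Input valid?"];
    done  [shape=ellipse, label="Done"];
    start -> check;
    check -> done  [label="yes"];
    check -> start [label="no"];
}
EOF

# Render only when graphviz is installed; the layout engine chooses node positions.
if command -v dot >/dev/null 2>&1; then
    dot -Tpng "$src" -o "$png" && echo "rendered $png"
else
    echo "graphviz not installed; wrote $src only"
fi
```

The script only declares nodes and edges; no coordinates appear anywhere, which is the point of leaving placement to the layout engine. Changing `-Tpng` to `-Tsvg` or `-Tpdf` selects a different output format.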

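A summary of convolutional neural networks (CNNs)

A convolutional neural network is still a layered network; only the function and form of its layers have changed, so it can be seen as a refinement of the traditional neural network. In essence, a convolutional network is a mapping from input to output. It can learn a large number of input-to-output mappings without needing any exact mathematical expression relating inputs to outputs: once the network has been trained on known patterns, it is able to map between input-output pairs.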

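Cleaning up drivers in the DriverStore folder

Many people notice that after Windows has been in use for a while, the C:\Windows\System32\DriverStore directory keeps growing. For anyone still on an SSD of 128 GB or less, that is a real headache.

DriverStore is where Windows keeps third-party drivers. When you install a driver, its files are copied into DriverStore. When you uninstall a driver, its files are removed from DriverStore. When you upgrade a driver, Windows keeps the old version so that you can roll back if something goes wrong.

For more about DriverStore, see [https://msdn.microsoft.com/en-us/library/ff544868(VS.85).aspx]

Sounds lovely, doesn't it? Unfortunately, reality always falls short somewhere.

Say you have an Nvidia graphics card. Nvidia is diligent and ships a new driver once or twice a month, and each installed version takes up several hundred megabytes. Six months later you take a look, and DriverStore has grown to several gigabytes.

So you search Baidu/Google/Bing for how to slim down DriverStore. Most of the posts that turn up tell you to take ownership of the folder and delete its contents.

You follow along, and congratulations: you have done irreversible damage to your system, and you will probably run into inexplicable errors from now on.

In fact, Windows has always shipped with a built-in tool, pnputil.exe, which can list the drivers in DriverStore and also delete them. For details, see: [https://msdn.microsoft.com/en-us/library/windows/hardware/ff550419(v=vs.85).aspx]

But command-line tools are such a hassle... What to do? DriverStore Explorer comes to the rescue.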

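SpaceSniffer, a disk usage analyzer for Windows

After a computer has been in use for a long time, most people find that Windows gets slower and slower and that the hard disk gradually fills up.

The frustrating part is that even when you want to clean up, you cannot tell which files or folders are taking up the most space. Checking folders one by one is a nightmare for the lazy. SpaceSniffer makes this much easier. It tallies the size of every folder and file and then displays them as intuitive blocks, numbers, and colors, so you get a complete picture of where your disk space has gone…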